The purpose of backtesting is to determine an algo trading strategy's profit expectancy in live trading. For this, the strategy is usually simulated with a few years of historical price data. However, a simple backtest will often not produce a result that is representative of live trading. Aside from overfitting and other sorts of bias, backtest results can differ from live trading in various ways that should be taken into account. Before going live, the final backtest should be verified with a Reality Check (see below). Testing a strategy is not a trivial task.

For quickly testing a strategy, select the script and click [Test]. Depending on the strategy, a test can be finished in less than a second, or it can run for several minutes on complex portfolio strategies. If the test requires strategy parameters, capital allocation factors, or machine-learned trade rules, they must be generated in a [Train] run beforehand. Otherwise Zorro will report an error stating that the parameters or factors file or the rules code was not found.

If the script needs to be recompiled, or if it contains any global or static variables that must be reset, click [Edit] before [Test]. This also resets the sliders to their default values. Otherwise the static and global variables and the sliders keep their settings from the previous test run.

If the test needs historical price data that is not found, you'll get an error message. Price data can be downloaded from your broker, from Internet price providers, or from the Zorro download page. It is stored in the History folder in the way described under Price History. If price data is not available for all years in the test period, you can substitute the missing years manually with files of length 0 to prevent the error message. No trades then take place during those years.

All test results - performance report, log file, trade list, chart - are stored in the Log folder. If the folder is cluttered with too many old log files and chart images, every 60 days a dialog will pop up and propose to automatically delete files that are older than 30 days. The minimum number of days can be set up in the Zorro.ini configuration file.

The test simulates a real broker account with a given leverage, spread, rollover, commission, and other asset parameters. By default, a microlot account with 100:1 leverage is simulated. If your account is very different, get your actual account data from the broker API as described under Download, or enter it manually. Spread and rollover costs can have a large influence on backtest results. Since spread changes from minute to minute and rollover from day to day, make sure that they are up to date before backtesting. Look up the current values from the broker and enter them in the asset list. Slippage, spread, rollover, commission, or pip cost can alternatively be set up or calculated in the script. If the NFA flag is set, either directly in the script or through the NFA parameter of the selected account, the simulation runs in NFA mode.

The test is not a real historical simulation. It rather simulates trades as if they were entered today, but with a historical price curve. For a real historical simulation, the spread, pip costs, rollovers and other asset and account parameters would have to be changed during the simulation according to their historical values. This can be done by script when the data is available, but it is normally not recommended for strategy testing because it would add artifacts to the results.

The test runs through one or many sample cycles, either with the full historical price data set, or with out-of-sample subsets. It generates a number of equity curves that are then used for a Monte Carlo Analysis of the strategy. The optional Walk Forward Analysis applies different parameter sets so that the test always stays out-of-sample. Several switches (see Mode) affect test and data selection. During the test, the progress bar indicates the current position within the test period. The lengths of its green and red areas display the current gross win and gross loss of the strategy. The result window below shows some information about the current profit situation, in the following form:

3: +8192 +256 214/299

| Field | Meaning |
| --- | --- |
| 3: | Current oversampling cycle (if any). |
| +8192 | Current balance. |
| +256 | Current value of open trades. |
| 214 | Number of winning trades so far. |
| /299 | Number of losing trades so far. |
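If the status line has to be evaluated outside of Zorro, for instance after copying it from the window, its fields can be split with a format string. The following is only a sketch in plain C; the `TestStatus` struct and the `parse_status` function are hypothetical names, and it assumes that all values are integers and that the cycle prefix is present, as in the example line above.

```c
#include <stdio.h>

/* Fields of a result-window line such as "3: +8192 +256 214/299".
   Hypothetical helper type, not part of Zorro. */
typedef struct {
    int cycle;      /* current oversampling cycle */
    int balance;    /* current balance */
    int open_value; /* current value of open trades */
    int wins;       /* winning trades so far */
    int losses;     /* losing trades so far */
} TestStatus;

/* Returns 1 when all five fields were parsed, 0 otherwise.
   Assumes the cycle prefix ("3:") is present. */
int parse_status(const char *line, TestStatus *s)
{
    return sscanf(line, "%d: %d %d %d/%d",
                  &s->cycle, &s->balance, &s->open_value,
                  &s->wins, &s->losses) == 5;
}
```

The `%d` conversion accepts the leading `+` sign, so the balance and open trade value need no special handling.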

After the test, a performance report and - if the LOGFILE flag was set - a log file are generated in the Log folder. If [Result] is clicked or the PLOTNOW flag was set, the trades and the equity chart are displayed in the chart viewer. The message window displays the annual return (if any) in percent of the required capital, and the average profit/loss per trade in pips. Due to different pip costs of the assets, a portfolio strategy can have a negative average pip return even when the annual return is positive, or vice versa.

The content of the message window can be copied to the clipboard by double clicking on it. The following performance figures are displayed (for details see performance report):

| Figure | Meaning |
| --- | --- |
| Median AR | Annual return by Monte Carlo analysis at 50% confidence level. |
| Profit | Total profit of the system in units of the account currency. |
| MI | Average monthly income of the system in units of the account currency. |
| DD | Maximum balance-equity drawdown. |
| Capital | Required initial capital. |
| Trades | Number of trades in the backtest period. |
| Win | Percentage of winning trades. |
| Avg | Average profit/loss of a trade in pips. |
| Bars | Average number of bars of a trade. Fewer bars mean less exposure to risk. |
| PF | Profit factor, gross win divided by gross loss. |
| SR | Sharpe ratio. Should be > 1 for good strategies. |
| UI | Ulcer index, the average drawdown percentage. Should be < 10% for ulcer prevention. |
| R2 | Determination coefficient, the equity curve linearity. Should be ideally close to 1. |
| AR | Annual return per margin and drawdown, for non-reinvesting systems with leverage. |
| ROI | Annual return on investment, for non-reinvesting systems without leverage. |
| CAGR | Compound annual growth rate, for reinvesting systems. |
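Two of these figures, PF and Win, follow directly from the list of trade results. The sketch below recomputes them in plain C from an array of per-trade profits and losses. The function names are hypothetical. Counting a trade with zero result as a non-winner and returning 0 when there are no losses are assumptions of this example, not a description of how Zorro handles those cases.

```c
#include <stddef.h>

/* Profit factor: gross win divided by gross loss.
   Returns 0 when there is no losing trade (ratio undefined). */
double profit_factor(const double *results, size_t n)
{
    double gross_win = 0.0, gross_loss = 0.0;
    for (size_t i = 0; i < n; i++) {
        if (results[i] > 0.0)
            gross_win += results[i];
        else
            gross_loss -= results[i]; /* accumulate losses as positive */
    }
    return gross_loss > 0.0 ? gross_win / gross_loss : 0.0;
}

/* Percentage of winning trades; 0 for an empty trade list. */
double win_percent(const double *results, size_t n)
{
    size_t wins = 0;
    for (size_t i = 0; i < n; i++)
        if (results[i] > 0.0)
            wins++;
    return n > 0 ? 100.0 * (double)wins / (double)n : 0.0;
}
```

For the trade results 100, -50, 200, -50, the gross win is 300 and the gross loss is 100, so the profit factor is 3 and the win percentage is 50.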

For replaying or visually debugging a script bar by bar, click [Replay] or [Step] on the chart window.

When the LOGFILE flag is set, a backtest generates the following files for examining the trade behavior or for further evaluation:

The messages in the log file are described under Log. The other file formats are described under Export. The optional asset name is appended when the script does not call asset, but uses the asset selected with the scrollbox. When LogNumber is set, the number is appended to the script name, which allows comparing logs from the current and previous backtests. When [Result] is clicked, the chart image and the plot.csv are generated again for the asset selected from the scrollbox. The chart is opened in the chart viewer and the log and performance report are opened in the editor.

The required price history resolution, and whether to use TICKS mode, depend on the strategy to be tested. As a rule of thumb, the duration of the shortest trade should cover at least 20 ticks in the historical data. For long-term systems, such as options trading or portfolio rebalancing, daily data as provided from STOOQ™ or Quandl™ is normally sufficient. Day trading strategies need historical data with 1-minute resolution (M1 data, .t6 files). If entry or exit limits are used and the trades have only a duration of a few bars, TICKS mode is mandatory. Scalping strategies that open or close trades in minutes require high resolution price history - normally T1 data in .t1 or .t2 files. For backtesting high frequency trading systems that must react in microseconds, you'll need order book or BBO (Best Bid and Offer) price quotes with exchange time stamps, available from data vendors in CSV or NxCore tape format. An example of how to test a HFT system can be found on Financial Hacker. For testing with T1, BBO, or NxCore data, Zorro S is required.
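The 20-ticks rule of thumb can be turned into a quick check. The sketch below is plain C with hypothetical names and assumes evenly spaced ticks, which holds for M1 or daily data but not for T1 quotes that arrive at irregular intervals.

```c
/* Rule of thumb from the text: the shortest trade should span
   at least 20 ticks of the historical data. */
#define MIN_TICKS_PER_TRADE 20

/* tick_seconds: spacing of the historical ticks (60 for M1 data,
   86400 for daily data). Returns 1 when the data resolution is
   fine enough for the shortest trade, 0 otherwise. */
int resolution_sufficient(long shortest_trade_seconds, long tick_seconds)
{
    if (tick_seconds <= 0)
        return 0;
    return shortest_trade_seconds / tick_seconds >= MIN_TICKS_PER_TRADE;
}
```

Under this assumption, M1 data suits a strategy whose shortest trade lasts at least 20 minutes, while daily data calls for trades that last about 20 days or more.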

The file formats are described in the Price History chapter. In T1 data, any tick represents a price quote. In M1 data, a tick represents one minute. Since the price tick resolution of .t1 and .t6 files is not fixed, .t1 files can theoretically contain M1 data and .t6 files can contain T1 data - but normally it's the other way around. Since many price quotes can arrive in a single second, T1 data usually contains a lot more price ticks than M1 data. Using T1 data can have a strong effect on the backtest realism, especially when the strategy uses short time frames or small price differences. Trade management functions (TMFs) are called more often, the tick function is executed more often, and trade entry and exit conditions are simulated with higher precision.

For using T1 historical price data, set the History string in the script to the ending of the T1 files (for instance History = "*.t1"). Zorro will then load its price history from .t1 files. Make sure that you have downloaded the required files beforehand, either with the Download script, or from the Zorro download page. Special streaming data formats such as NxCore can be directly read by script with the priceQuote function. An example can be found under Fill mode.

Naturally, backtests take longer and require more memory with higher data resolution and longer test periods. Memory limits can restrict the number of assets and backtest years, especially with .t1 or .t2 backtests in TICKS mode. For reducing the resolution of T1 data and thus the memory requirement and backtest time, increase TickTime. Differences between T1 backtests and M1 backtests can also arise from the different composition of M1 ticks on the broker's price server, causing different trade entry and exit prices. For instance, a trade at 15:00:01 would be entered at the first price quote after 15:00:01 with T1 data, but at the close of the 15:00:00 candle with M1 data. If this difference is relevant for your strategy, test it with T1 data. The TickFix variable can shift historical ticks forward or backward in time, which allows testing their effect on the result.

Zorro backtests are as close to real trading as possible, especially when the TICKS flag is set, which causes trade functions and stop, profit, or entry limits to be evaluated at every price tick in the historical data. Theoretically a backtest generates the same trades and thus returns the same profit or loss as live trading during the same time period. In practice there can and often will be deviations, some intentional and desired, some unwanted. Differences between backtest and live trading are caused by the following effects:

The likelihood that the strategy exploits real inefficiencies depends on how it was developed and optimized. There are many factors that can cause bias in the test result. Curve fitting bias affects all strategies that use the same price data set for test and training. It generates far too optimistic results and the illusion that a strategy is profitable when it isn't. Peeking bias is caused by letting knowledge of the future affect the trade algorithm. An example is calculating trade volume with capital allocation factors (OptimalF) that are generated from the whole test data set (always test at first with fixed lot sizes!).

Data mining bias (or selection bias) is caused not only by data mining, but already by the mere act of developing a strategy with historical price data, since you will be selecting the most profitable algorithm or asset depending on test results. Trend bias affects all 'asymmetric' strategies that use different algorithms, parameters, or capital allocation factors for long and short trades. For preventing this, detrend the trade signals or the trade results. Granularity bias is a consequence of different price data resolution in test and in real trading. For reducing it, use the TICKS flag, especially when a trade management function is used. Sample size bias is the effect of the test period length on the results. Values derived from maxima and minima - such as drawdown - are usually proportional to the square root of the number of trades. This generates more pessimistic results over long test periods.
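The square root relation behind the sample size bias can be written as a rough scaling rule. The symbols are assumptions of this sketch: $DD(N)$ stands for the drawdown observed over $N$ trades, and the relation is only the proportionality stated above, not an exact law.

```latex
DD(N_2) \approx DD(N_1) \, \sqrt{\frac{N_2}{N_1}}
```

For example, if a backtest with $N_1 = 100$ trades shows a drawdown of 1000 account currency units, a test of the same system over $N_2 = 400$ trades would be expected to show roughly $1000 \cdot \sqrt{4} = 2000$ units, although the strategy itself has not changed.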

Before going live, always verify backtest results with a Reality Check. It can detect many sources of bias. For details please read Why 90% of Backtests Fail. Zorro comes with scripts for two tests:

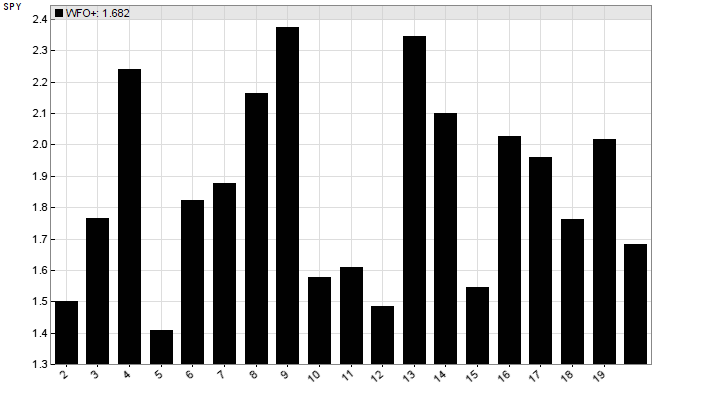

A WFO profile determines the dependence of the strategy on the number or length of the WFO cycles. It should be used for any walk-forward optimized trading system. For this, open the WFOProfile.c script, and enter the name of your strategy script in the marked line. In your script, uncomment the NumWFOCycles or WFOPeriod setting and - if it's a portfolio - disable all components except the component you want to test, and disable reinvesting. Then start WFOProfile.c in [Train] mode. If the resulting profile shows large random result differences as in the image below, something is likely wrong with that trading system.

Suspicious WFO profile. Don't trade this live.

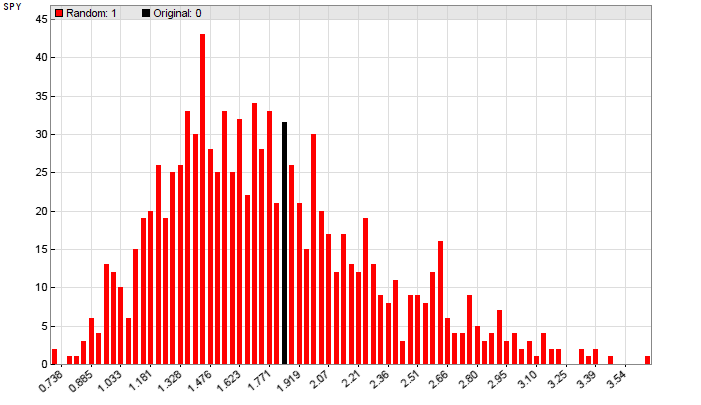

The Montecarlo Reality Check determines whether a positive backtest result was caused by a real edge of the trading system, or just by over-training or randomness. Open the MRC.c script and enter the name of your strategy script in the marked line. In your script - if it's a portfolio - disable all components except the component you want to test, and disable reinvesting. Then start MRC.c in [Train] mode for trained strategies, or in [Test] mode when the strategy has no trained models, rules, or parameters. At the end you'll get a histogram and a p-value. The p-value gives the probability that the backtest result is untrustworthy. It should be well below 5%-10% for a valid backtest.

Lucky backtest result (black line). Don't trade this live, either.
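To illustrate what such a p-value expresses, the sketch below computes it in the generic way of a randomization test: as the fraction of randomized runs that performed at least as well as the real backtest. This is an assumption for illustration, not a description of the internals of MRC.c, and the function name is hypothetical.

```c
#include <stddef.h>

/* Fraction of randomized runs whose result is at least as good as
   the real backtest result. A small value suggests that the real
   result is unlikely to be a product of chance.
   Returns 1.0 (no evidence of an edge) for an empty sample. */
double reality_check_pvalue(double real_result,
                            const double *random_results, size_t n)
{
    size_t at_least_as_good = 0;
    if (n == 0)
        return 1.0;
    for (size_t i = 0; i < n; i++)
        if (random_results[i] >= real_result)
            at_least_as_good++;
    return (double)at_least_as_good / (double)n;
}
```

With four randomized results 1, 2, 3, 4 and a real result of 3.5, one of the four runs is at least as good, which gives a p-value of 0.25, far above the 5%-10% range.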
